Practical introduction to AWS serverless computing using Microsoft .NET

The serverless approach has become increasingly popular, and for good reasons: it needs very little initial configuration, scales automatically, has high availability built in, is extremely lightweight and offers a pay-as-you-go pricing model.

A BIT OF THEORY

WHAT EXACTLY IS SERVERLESS

Serverless means that developers do not need to care about the server on which their code runs. They just deploy the code and configure its properties, mainly its triggers. Triggers are external events that cause the code to execute. And that is it!

The term "serverless" might be a bit confusing because, in the end, there is always some server on which the code runs. That server is, however, abstracted away from the developer because the cloud provider takes care of it.

As a result, programmers can focus on implementing their domain requirements instead of spending a large part of their time on side activities such as creating a virtual machine, installing an operating system and other required dependencies, adding the machine to an internal network with appropriate privileges, or making sure that the latest software updates are installed.

As Amazon puts it:

Serverless is a way to describe the services, practices, and strategies that enable you to build more agile applications so you can innovate and respond to change faster. With serverless computing, infrastructure management tasks like capacity provisioning and patching are handled by AWS, so you can focus on only writing code that serves your customers. Serverless services like AWS Lambda come with automatic scaling, built-in high availability, and a pay-for-value billing model. Lambda is an event-driven compute service that enables you to run code in response to events from over 150 natively-integrated AWS and SaaS sources – all without managing any servers.

WHY SERVERLESS

A couple of years ago, building a cloud-based application typically required thinking about the infrastructure first. How many VMs should be launched? How many resources (CPU, RAM, disk) should be assigned to each of them? How should the network and all permissions be configured? And on top of that, how can high availability and geo-scalability be delivered?

Such an approach not only requires a lot of additional time before the actual implementation of the project can start, it also brings some other severe problems:

- VMs are underutilized

- The rigid structure makes it difficult to respond to changes in requirements (functional and non-functional, e.g. an increase in data volume)

- It is generally more expensive

- Environment startup time is much slower

- You need to take care of OS and application runtime maintenance

AWS SERVERLESS SERVICES

AWS offers a handful of services that support the serverless approach [1]:

- Compute
  - Lambda
  - Fargate

- Integration
  - EventBridge
  - Step Functions
  - Simple Queue Service
  - Simple Notification Service
  - API Gateway
  - AppSync

- Data Store
  - S3
  - DynamoDB
  - RDS Proxy
  - Aurora Serverless

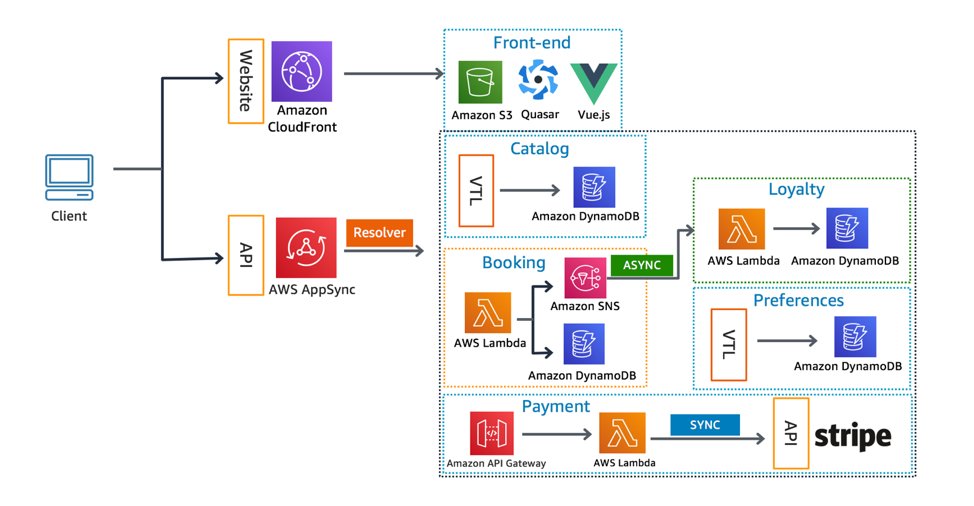

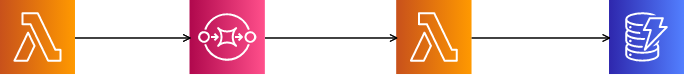

The image below shows an architecture of a sample system built with AWS serverless components:

AWS OR MAYBE AZURE?

Microsoft Azure offers comparable serverless services to AWS, although under different names. For .NET developers, Azure is the natural first choice: .NET and Azure come from the same company and integrate seamlessly. In fact, some Azure components have been developed using the .NET platform. Azure also tends to be a bit cheaper, although this strongly depends on the specific scenario and needs. Nevertheless, Amazon Web Services should not be underestimated.

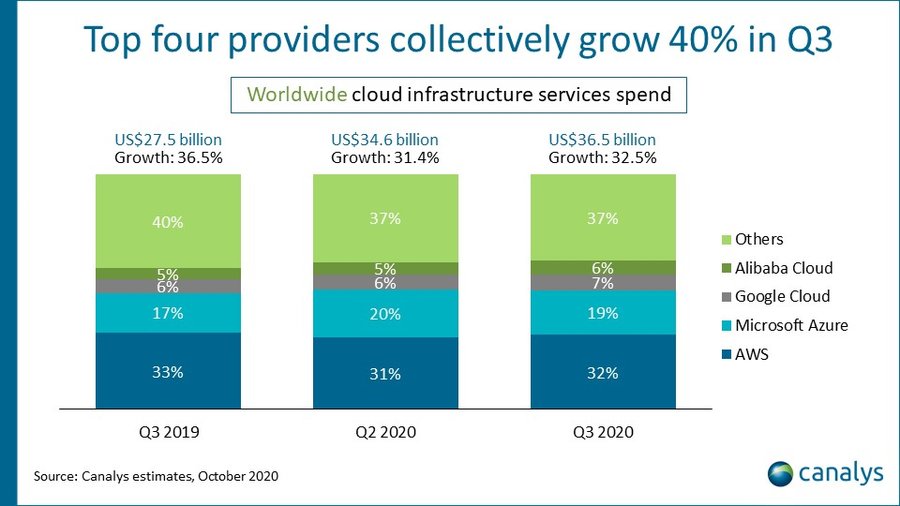

First of all, it is still the most popular cloud services provider, with a stable position in the market:

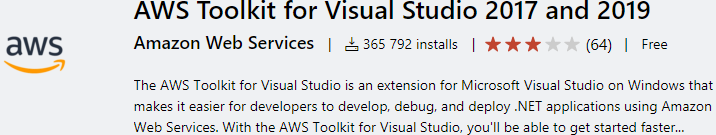

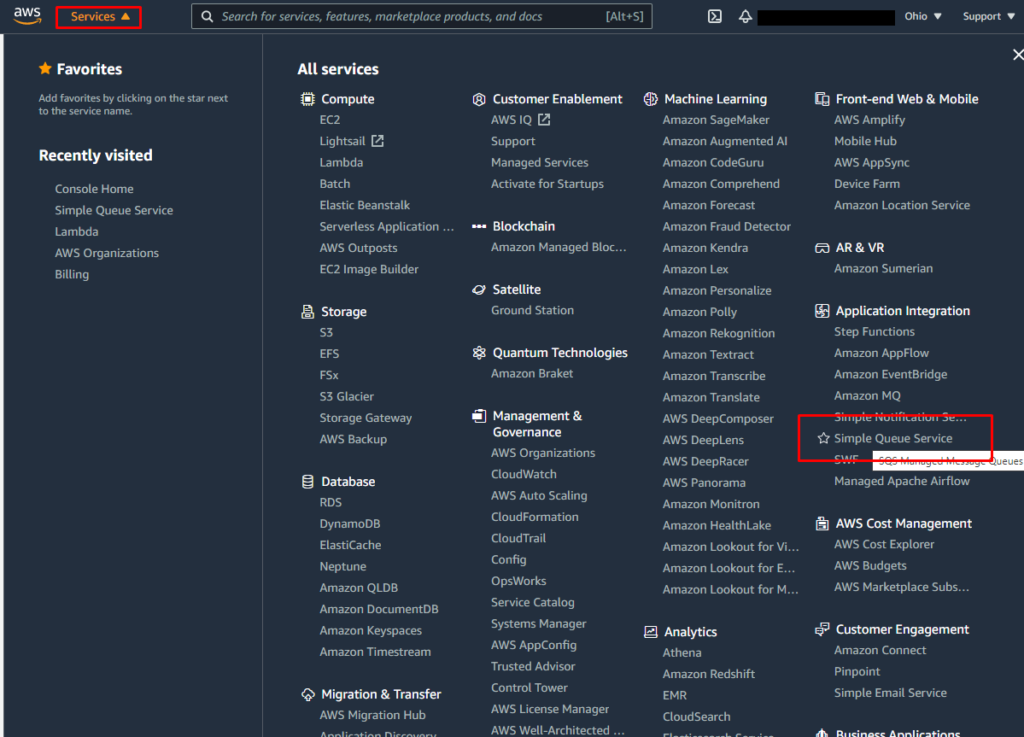

Secondly, it fully supports .NET and offers many useful tools, including a very capable Visual Studio add-in that we will use in the practical part of this article:

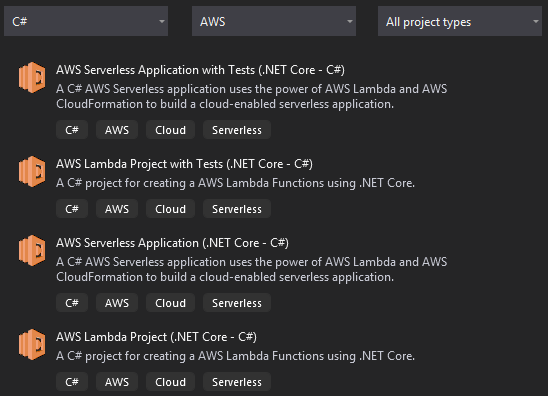

Additionally new Visual Studio project templates have been supplied:

On top of that, AWS delivers many useful native .NET classes for accessing and manipulating its services.

TIME FOR PRACTICE

Enough theory. To demonstrate how easy and straightforward it is to get something running, let's implement a simple flow that uses a couple of serverless components:

- Lambda: AWS Lambda is a serverless compute service that lets you run code without provisioning or managing servers, creating workload-aware cluster scaling logic, maintaining event integrations, or managing runtimes. With Lambda, you can run code for virtually any type of application or backend service – all with zero administration. Just upload your code as a ZIP file or container image, and Lambda automatically and precisely allocates compute execution power and runs your code based on the incoming request or event, for any scale of traffic. You can set up your code to automatically trigger from 140 AWS services or call it directly from any web or mobile app. [3]

- DynamoDB: Amazon DynamoDB is a key-value and document database that delivers single-digit millisecond performance at any scale. It’s a fully managed, multi-region, multi-active, durable database with built-in security, backup and restore, and in-memory caching for internet-scale applications. DynamoDB can handle more than 10 trillion requests per day and can support peaks of more than 20 million requests per second. [4]

- SQS (Simple Queue Service): Amazon Simple Queue Service (SQS) is a fully managed message queuing service that enables you to decouple and scale microservices, distributed systems, and serverless applications. SQS eliminates the complexity and overhead associated with managing and operating message oriented middleware, and empowers developers to focus on differentiating work. Using SQS, you can send, store, and receive messages between software components at any volume, without losing messages or requiring other services to be available. [5]

The flow diagram is shown below:

The flow starts with the first Lambda function, which generates a short string of uppercase letters and puts it into a queue (SQS) for further processing. The second Lambda function is triggered when an item arrives in the queue and consumes one item at a time. It converts all letters to lowercase and inserts the resulting string into a NoSQL database (DynamoDB).
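Before touching any AWS service, the data flow itself can be tried out locally. The sketch below is only an in-memory illustration of the flow described above: a `Queue<string>` stands in for SQS and a `Dictionary<string, string>` stands in for the DynamoDB table. The names `FlowSketch`, `GenerateWord`, `ProcessWord` and `Run` are made up for this example and are not part of any AWS API.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class FlowSketch
{
    private const string Letters = "ABCDEFGHIJKLMNOPQRSTUVWXYZ";

    // Role of the first Lambda: build a random word of uppercase letters.
    public static string GenerateWord(Random random, int length) =>
        new string(Enumerable.Range(0, length)
            .Select(_ => Letters[random.Next(Letters.Length)])
            .ToArray());

    // Role of the second Lambda: convert the word to lowercase.
    public static string ProcessWord(string word) => word.ToLowerInvariant();

    // Runs the whole flow in memory and returns the simulated table content.
    public static IReadOnlyDictionary<string, string> Run(int count, int wordLength, int seed)
    {
        var random = new Random(seed);
        var queue = new Queue<string>();               // stand-in for the SQS queue
        var table = new Dictionary<string, string>();  // stand-in for the DynamoDB table

        // Producer side: put the generated words into the queue.
        for (var i = 0; i < count; i++)
        {
            queue.Enqueue(GenerateWord(random, wordLength));
        }

        // Consumer side: take one item at a time, process it and store it under a new key.
        while (queue.Count > 0)
        {
            var word = queue.Dequeue();
            table[Guid.NewGuid().ToString()] = ProcessWord(word);
        }

        return table;
    }
}
```

Calling `FlowSketch.Run(3, 10, 42)` returns three entries, each holding a ten-letter lowercase word. In the real system the two halves of `Run` live in separate Lambda functions and only communicate through the queue.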

ACCOUNT

To access AWS components you need an AWS account. For the purpose of this demo you can use a Free Tier account. Note that, just like with Azure, you will need a valid credit card to complete the registration.

TOOLS

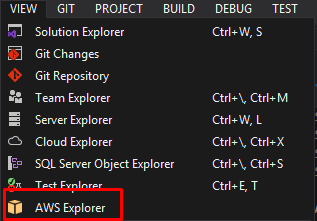

The main tool for AWS operations is the AWS Console. In this article, however, we will prefer AWS Explorer, a Visual Studio add-in. It is handier than the AWS Console, although its functionality is limited. If you have installed the AWS Toolkit for Visual Studio, AWS Explorer should already be available in the View menu:

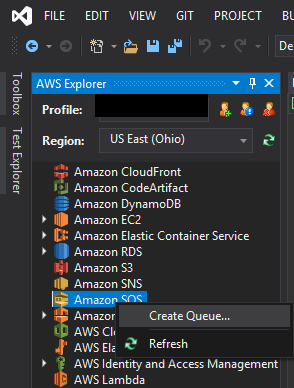

QUEUE

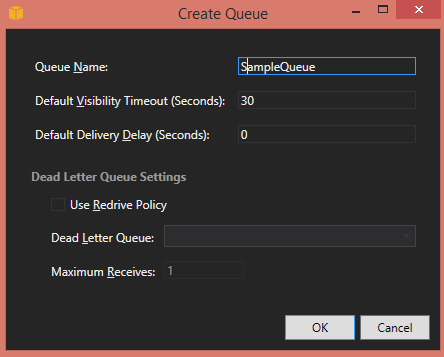

First, let's create the queue using AWS Explorer:

It is enough to just provide a queue name:

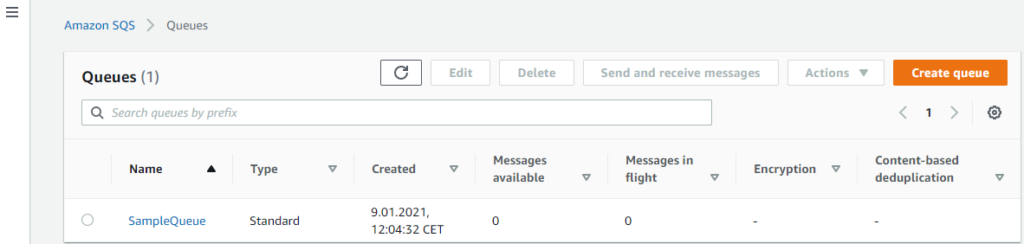

The queue should be created in almost no time. It is immediately operational. It should be visible in AWS Explorer in Visual Studio and also in AWS Console:

FIRST LAMBDA

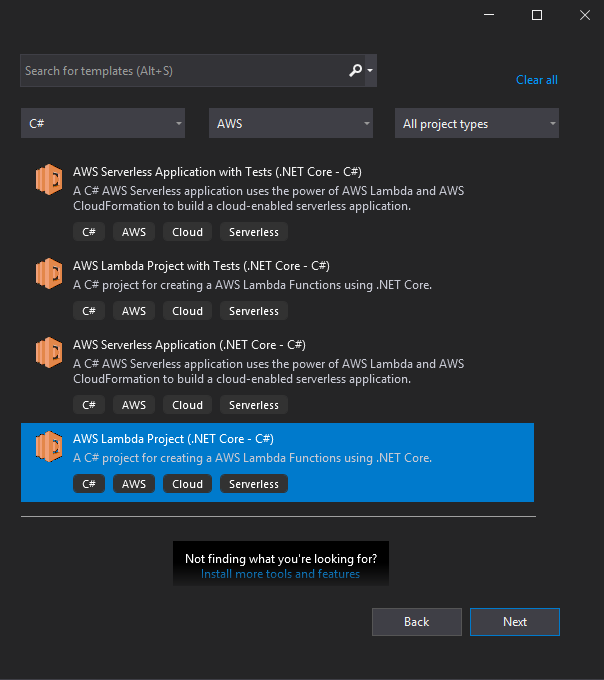

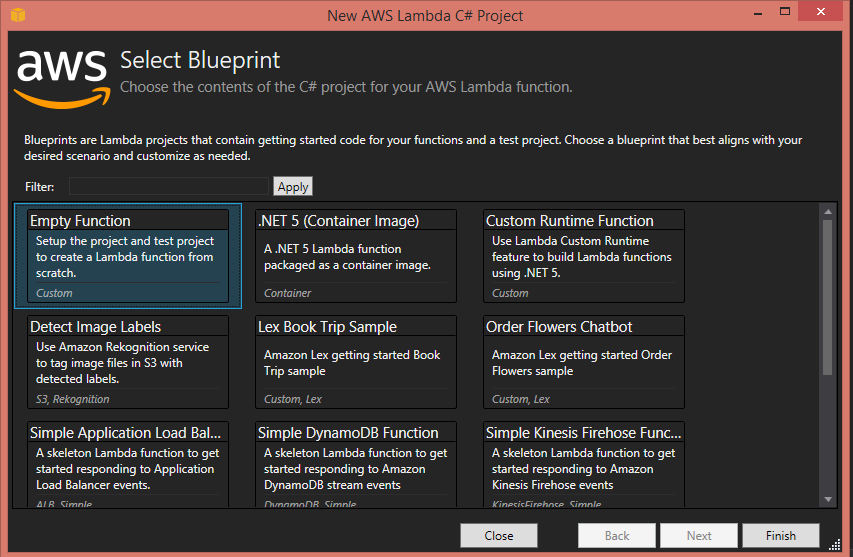

Once the queue is ready, let's create the first Lambda function by creating a new project in Visual Studio using the AWS Lambda Project (.NET Core – C#) project template:

Then select the Empty Function blueprint.

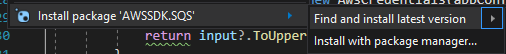

Next, install the necessary NuGet packages (the code below relies on the AWS SDK for SQS and on Newtonsoft.Json) and use the following code snippet to define the Lambda function:

    using System;
    using System.Linq;
    using System.Threading.Tasks;
    using Amazon.Lambda.Core;
    using Amazon.SQS;
    using Amazon.SQS.Model;
    using Newtonsoft.Json;

    // Assembly attribute to enable the Lambda function's JSON input to be converted into a .NET class.
    [assembly: LambdaSerializer(typeof(Amazon.Lambda.Serialization.SystemTextJson.DefaultLambdaJsonSerializer))]

    namespace AWSLambda2
    {
        public class Function
        {
            private readonly Random random = new Random();
            private readonly int wordLength = 10;

            // Builds a random string of uppercase letters with the given length.
            public string RandomString(int length)
            {
                const string chars = "ABCDEFGHIJKLMNOPQRSTUVWXYZ";
                return new string(Enumerable.Repeat(chars, length).Select(s => s[random.Next(s.Length)]).ToArray());
            }

            /// <summary>
            /// Generates a random uppercase word and sends it to the SQS queue.
            /// </summary>
            /// <param name="input">Invocation payload (not used).</param>
            /// <param name="context">Lambda execution context.</param>
            public async Task FunctionHandler(object input, ILambdaContext context)
            {
                // The region must match the region in which the queue was created.
                using var sqsClient = new AmazonSQSClient(Amazon.RegionEndpoint.USEast2);

                // Look up the queue URL by the queue name.
                GetQueueUrlRequest urlReq = new GetQueueUrlRequest
                {
                    QueueName = "SampleQueue"
                };
                var queueUrlResp = await sqsClient.GetQueueUrlAsync(urlReq);

                // The word is serialized to JSON, so the message body includes the surrounding quotes.
                string messageBody = JsonConvert.SerializeObject(RandomString(wordLength));
                _ = await sqsClient.SendMessageAsync(queueUrlResp.QueueUrl, messageBody);
            }
        }
    }

The code is kept as simple as possible to demonstrate the idea. The FunctionHandler method is called every time the Lambda function is executed. In the presented scenario it is going to be executed manually. The code is pretty much self-explanatory. Remember to set the correct region and queue name.
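Since the region and the queue name are the two values that most often need changing, one option is to read them from environment variables instead of hard-coding them. The following is a minimal sketch under stated assumptions: `QueueSettings` and the `QUEUE_NAME` variable are hypothetical names invented for this example, while `AWS_REGION` is an environment variable that the Lambda runtime sets. The fallback values are the ones used in the code above.

```csharp
using System;

public sealed class QueueSettings
{
    public string Region { get; }
    public string QueueName { get; }

    public QueueSettings(string region, string queueName)
    {
        Region = region;
        QueueName = queueName;
    }

    // Reads the settings through the supplied lookup function, which makes the logic easy to test.
    // QUEUE_NAME is a hypothetical variable name chosen for this sketch.
    public static QueueSettings Load(Func<string, string> getVariable)
    {
        var region = getVariable("AWS_REGION");
        var queueName = getVariable("QUEUE_NAME");

        // Fall back to the values used in the article when a variable is missing or empty.
        return new QueueSettings(
            string.IsNullOrWhiteSpace(region) ? "us-east-2" : region,
            string.IsNullOrWhiteSpace(queueName) ? "SampleQueue" : queueName);
    }

    // Convenience overload that reads the real process environment.
    public static QueueSettings Load() => Load(Environment.GetEnvironmentVariable);
}
```

Inside the handler, the result could then be used along the lines of `new AmazonSQSClient(Amazon.RegionEndpoint.GetBySystemName(settings.Region))` and `QueueName = settings.QueueName`. Whether this is worth the extra indirection in a demo is a judgment call; it mainly pays off once the same function is deployed to more than one environment.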

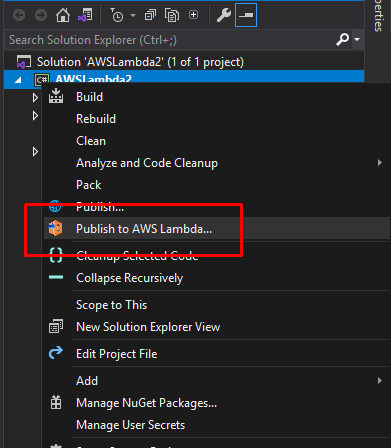

In order to deploy the code to AWS use the Publish to AWS Lambda option from Solution Explorer:

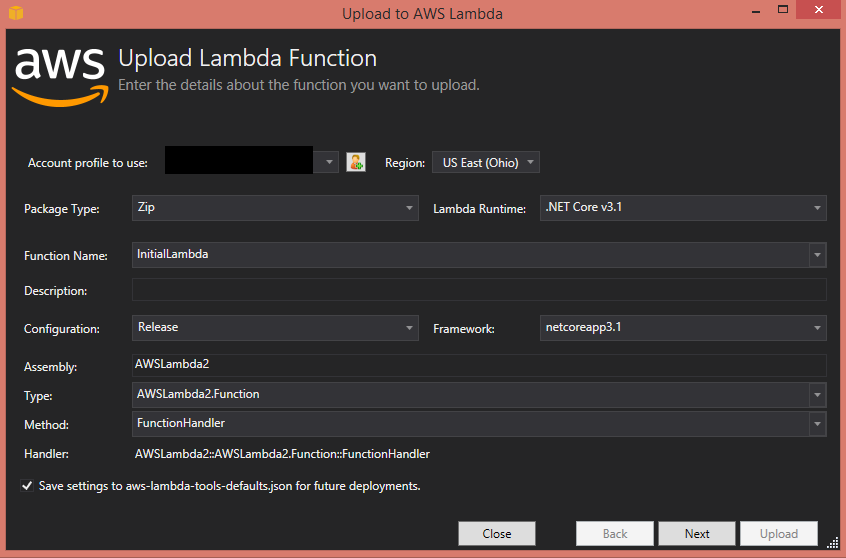

Then fill in the following dialog:

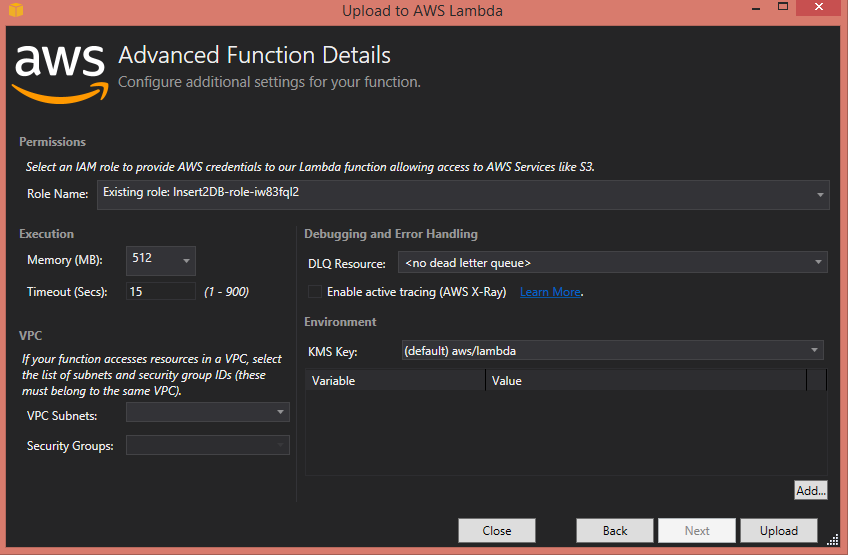

Pay attention to the field Function Name. This is the human-readable name that is displayed when you browse your Lambda functions collection. At this point it does not have to match anything. Also make sure that the Assembly field matches the name of your assembly and that the Method field correctly points to the method that is supposed to be called when this Lambda function is triggered. AWS uses FunctionHandler as the default name but nothing prevents you from using your own name. Then click Next to move to the second part of the upload dialog:

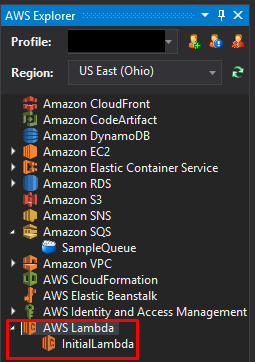

After you click the Upload button, the Visual Studio add-in will attempt to upload the Lambda function to AWS. If everything goes well, you should see the uploaded function in AWS Explorer:

CHECK IF EVERYTHING WORKS WELL SO FAR

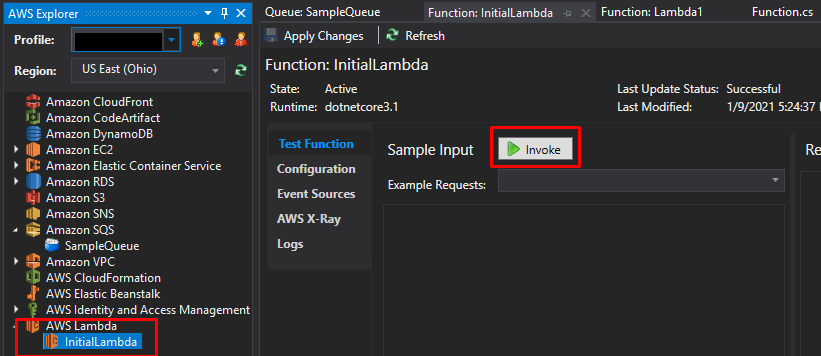

Let's verify whether these two serverless components work and communicate properly. At this point the Lambda function is operational and should put an item into the queue once executed. Double-click InitialLambda in AWS Explorer to open its details. Then click Invoke to execute the function manually.

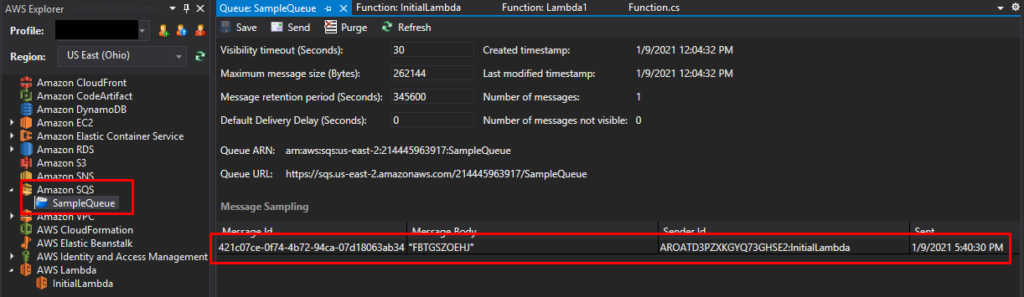

Now let’s double click on SampleQueue to check out the results:

The image above shows that there is indeed an item in the queue. We can even see the generated string in the Message Body column.

So far so good. Two of the created components are already operational and properly communicate with each other. During the next steps we will create a DynamoDB database and also build the second Lambda function that will perform a lowercase operation.

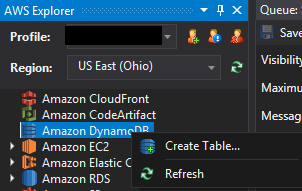

DYNAMODB

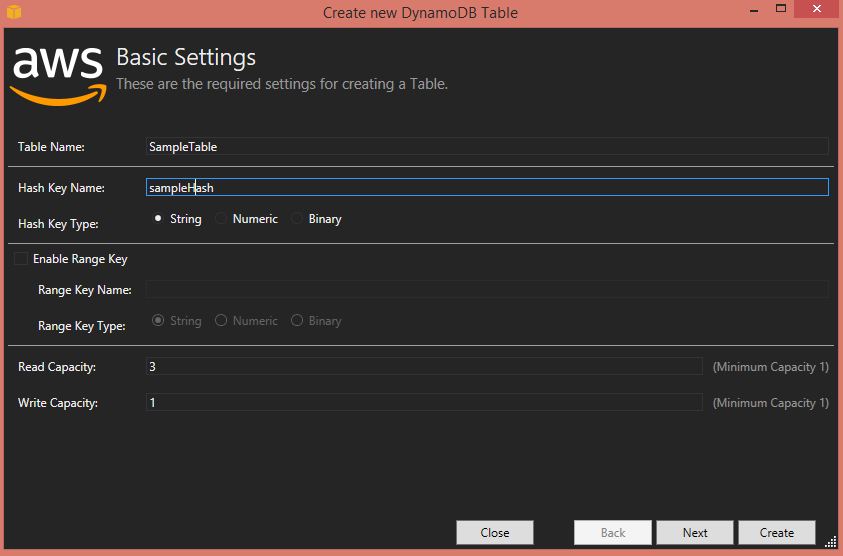

Let’s start by creating a table:

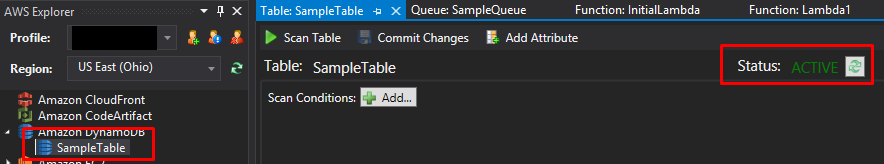

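Hash Key Name is the name of the table's hash (partition) key attribute, whose value identifies each item. The code in the next section assumes that the table is called SampleTable and that its hash key is called sampleHash. The table needs a couple of seconds to be created. To verify that everything went fine, check AWS Explorer: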

This is it. Our database is ready for use.

SECOND LAMBDA

Now let’s build the last remaining component: the second Lambda function. Its purpose is to perform a lowercase operation on a string received from the queue that has been produced by the first Lambda function. The code is presented below:

    using System;
    using System.Threading.Tasks;
    using Amazon.Lambda.Core;
    using Amazon.Lambda.SQSEvents;
    using Amazon.DynamoDBv2;
    using Amazon.DynamoDBv2.DocumentModel;

    // Assembly attribute to enable the Lambda function's JSON input to be converted into a .NET class.
    [assembly: LambdaSerializer(typeof(Amazon.Lambda.Serialization.SystemTextJson.DefaultLambdaJsonSerializer))]

    namespace AWSLambda1
    {
        public class Function
        {
            // The client is static so that it can be reused between invocations.
            private static readonly AmazonDynamoDBClient client = new AmazonDynamoDBClient();

            /// <summary>
            /// This method is called for every Lambda invocation. This method takes in an SQS event object and can be used
            /// to respond to SQS messages.
            /// </summary>
            /// <param name="evnt">The SQS event containing the received messages.</param>
            /// <param name="context">Lambda execution context.</param>
            public async Task FunctionHandler(SQSEvent evnt, ILambdaContext context)
            {
                foreach (var message in evnt.Records)
                {
                    await ProcessMessageAsync(message, context);
                }
            }

            private async Task ProcessMessageAsync(SQSEvent.SQSMessage message, ILambdaContext context)
            {
                try
                {
                    // Load the table by name; "sampleHash" must match the table's hash key name.
                    Table sampleTable = Table.LoadTable(client, "SampleTable");
                    var item = new Document
                    {
                        ["sampleHash"] = Guid.NewGuid().ToString(),
                        ["word"] = message.Body.ToLower()
                    };
                    await sampleTable.PutItemAsync(item);
                    context.Logger.LogLine($"Processed message {message.Body}");
                }
                catch (Exception e)
                {
                    // Log the failure and rethrow so that the invocation is reported as failed
                    // and the message becomes visible in the queue again for a retry.
                    context.Logger.LogLine($"Failed to process message {message.MessageId}: {e.Message}");
                    throw;
                }
            }
        }
    }

The code is also rather self-explanatory. The database table is loaded by its name. Then a new Document (record) is created and passed to the asynchronous PutItemAsync (insert) method.

The code is kept as simple as possible at the cost of quality. I hope it is more readable as a result.
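One detail is easy to miss: the first Lambda function serializes the word with JsonConvert.SerializeObject, so the message body arrives wrapped in JSON quotes (for example `"ABCDEFGHIJ"` including the quote characters), and lowercasing the body directly keeps those quotes in the stored value. The sketch below shows one possible way to decode the body first. It uses System.Text.Json, and `MessageBodyDecoder` and `ToLowercaseWord` are hypothetical helper names introduced for this example only.

```csharp
using System;
using System.Text.Json;

public static class MessageBodyDecoder
{
    // Decodes a JSON string literal if the body is one; otherwise treats the body as plain text.
    // The result is returned in lowercase.
    public static string ToLowercaseWord(string messageBody)
    {
        string word;
        try
        {
            // A body such as "\"ABC\"" is a JSON string literal and decodes to ABC.
            word = JsonSerializer.Deserialize<string>(messageBody);
        }
        catch (JsonException)
        {
            // The body is not a JSON string (for example plain text), so use it unchanged.
            word = messageBody;
        }

        // A JSON null decodes to a null reference; store an empty word in that case.
        return (word ?? string.Empty).ToLowerInvariant();
    }
}
```

With such a helper, the second Lambda function could store `MessageBodyDecoder.ToLowercaseWord(message.Body)` instead of `message.Body.ToLower()`. An alternative that avoids decoding altogether would be to send the raw string from the first function without serializing it.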

Once the Lambda function is implemented, deploy it using the same technique as with the previous function.

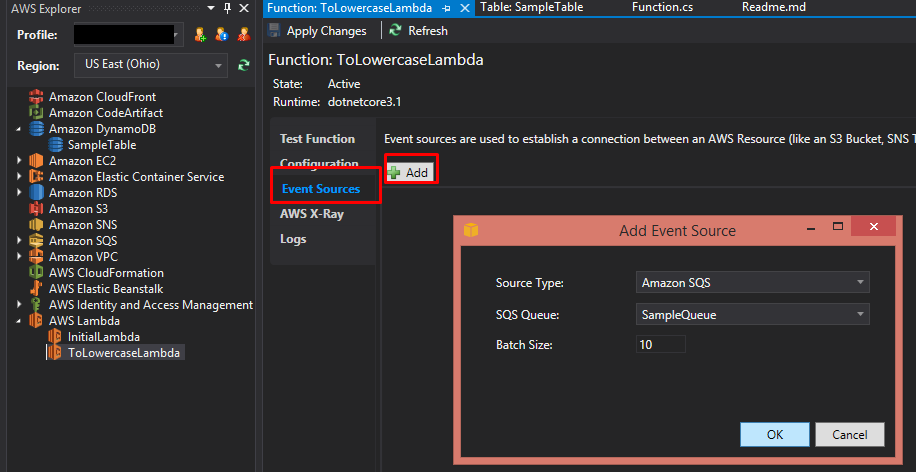

ADD EVENT SOURCE

Once the second Lambda function is ready, there is one last thing to add. We need to tell that function to execute whenever there is an item in the queue to be consumed. To do so, we add the queue as an event source of the function:

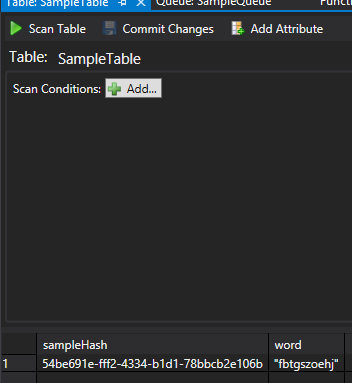

Once this relationship is added the queue item should be processed immediately. If everything went well the processed item should appear in the database:

CONCLUSION

This article offered a short theoretical introduction to serverless computing. It described the basic AWS serverless components and the main tools used to create and manage them. Then it provided a practical step-by-step guide on how to build a very simple system that uses only serverless components.

As shown in the guide the serverless approach offers a very nimble and efficient way to build up a system. Built-in scalability and high availability along with an attractive pricing model make it an interesting option to consider over more traditional approaches.

REFERENCES

- https://aws.amazon.com/serverless/build-a-web-app/

- https://www.serverless.com/framework/docs/providers/aws/

- https://www.serverless.com/framework/docs/providers/aws/

- https://medium.com/serverless-transformation/what-a-typical-100-serverless-architecture-looks-like-in-aws-40f252cd0ecb

- https://aws.amazon.com/blogs/compute/icymi-serverless-q3-2020/

- https://docs.aws.amazon.com/sdk-for-net/v3/developer-guide/net-dg-config-netcore.html

- https://www.infoq.com/articles/cloud-development-aws-sdk/
