State management and project structure with Terraform

Terraform is an open-source Infrastructure as Code tool created by HashiCorp. It allows users to manage various types of IT resources (especially cloud ones) using a declarative language known as HashiCorp Configuration Language. In this article, you will learn how to manage state and structure a project to simplify teamwork.

State

When running examples from previous articles, or when applying your own Terraform scripts, you have surely noticed the terraform.tfstate file that appears in your project's main folder right after you apply a Terraform configuration. This file is nothing more than a description of the binding between resources in your configuration files and the “real” resources provisioned in the cloud.

When you add a new resource to your configuration and apply it, Terraform updates the state file with a binding between the local resource configuration (module, type, name) and an instance of this resource (unique ID, attributes) in the cloud. Every time you plan changes, Terraform refreshes the recorded state against the attributes of the real resources. When you remove a resource from your configuration, Terraform detects the change easily, because the state still contains a resource that your configuration no longer defines. The state file is plain JSON, so you can inspect it at any time, or even modify it, although manual edits should be made with care.

The concept of a state file kept locally is simple and powerful enough to start playing with Terraform. In bigger projects, especially those maintained by a team of developers, it might not be enough. You could keep your state file under version control together with all other files, but then the state must be published to the common repository after every ‘terraform apply’ command so that other team members can use it. That is neither convenient nor safe. The solution is to keep the state in a remote location that is available to all developers and automatically updated by Terraform. This solution is called a remote state.

For the sake of simplicity, we start with a single-file module that creates a storage bucket:

    provider "google" {
      project = "terraform-sandbox-302013"
      region  = "europe-west3"
    }

    resource "google_storage_bucket" "default" {
      name = "terraform-sandbox-302013-my-storage"
    }

Now we will add remote state configuration to this file:

    terraform {
      backend "gcs" {
        bucket = "terraform-sandbox-302013-tfstate"
        prefix = "dev"
      }
    }

What does this configuration do? It tells Terraform that Google Cloud Storage (gcs) should be used as the backend and that all state files should be kept in the bucket named terraform-sandbox-302013-tfstate, in the dev folder. Thanks to the prefix, we can keep multiple state files in the same bucket. Unfortunately, we need to use hardcoded values in this configuration, because variables cannot be used at the initialization stage. Also, the bucket must exist before running the ‘terraform init’ command, since Terraform does not support creating backends “on demand”. Of course, nothing stops us from creating this bucket with a separate Terraform script, just one without a remote state.

Running terraform init, followed by terraform plan and finally terraform apply, proves that our configuration works correctly.
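Creating the backend bucket with Terraform itself could look roughly like the sketch below. It is meant to live in its own folder (the name bootstrap is an assumption) and to use the default local state. The location value, the versioning block, and the prevent_destroy guard are illustrative choices, not part of the original setup.

```hcl
# bootstrap/main.tf (hypothetical folder): applied once, with local state,
# before any configuration that uses the "gcs" backend is initialized.

provider "google" {
  project = "terraform-sandbox-302013"
  region  = "europe-west3"
}

resource "google_storage_bucket" "tfstate" {
  name     = "terraform-sandbox-302013-tfstate"
  location = "EU" # assumption: pick the location that suits your project

  # Keep older versions of the state objects so a previous state can be recovered.
  versioning {
    enabled = true
  }

  # Make Terraform refuse to plan the destruction of the state bucket.
  lifecycle {
    prevent_destroy = true
  }
}
```

After this bucket exists, the configuration with the backend block can be initialized with terraform init as described above.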

    $ terraform init

    Initializing the backend...

    Initializing provider plugins...
    - Finding latest version of hashicorp/google...
    - Installing hashicorp/google v3.53.0...
    - Installed hashicorp/google v3.53.0 (signed by HashiCorp)

    Terraform has created a lock file .terraform.lock.hcl to record the provider
    selections it made above. Include this file in your version control repository
    so that Terraform can guarantee to make the same selections by default when
    you run "terraform init" in the future.

    Terraform has been successfully initialized!

    You may now begin working with Terraform. Try running "terraform plan" to see
    any changes that are required for your infrastructure. All Terraform commands
    should now work.

    If you ever set or change modules or backend configuration for Terraform,
    rerun this command to reinitialize your working directory. If you forget, other
    commands will detect it and remind you to do so if necessary.

    $ terraform apply -auto-approve

    google_storage_bucket.default: Creating...
    google_storage_bucket.default: Creation complete after 1s [id=terraform-sandbox-302013-my-storage]

    Apply complete! Resources: 1 added, 0 changed, 0 destroyed.

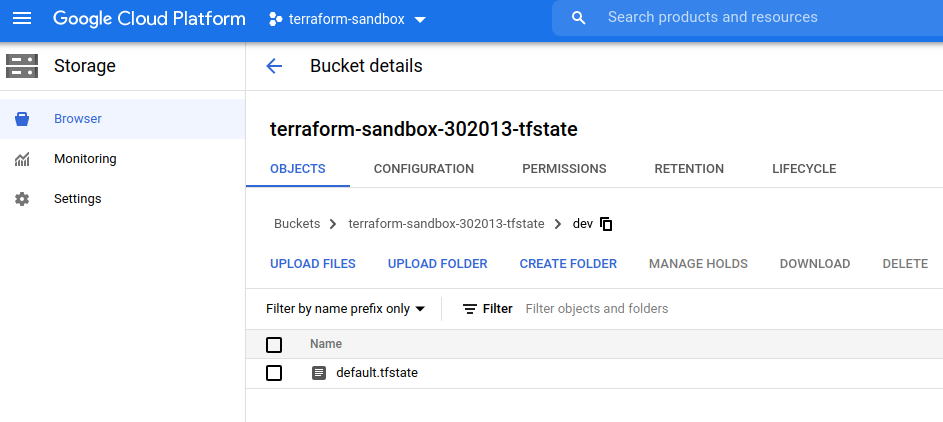

After opening the Storage browser in the web console of our project, we can see that a default.tfstate file has appeared in the backend bucket.

From this point, we are ready to share our infrastructure code with teammates, without any worries about the state – Terraform will keep it up-to-date after every change.

The shared remote state is the first step to hassle-free teamwork. In the second part of this article, you will find some bits of advice on project structure and best practices that will make your work smooth.

Project Structure

Small to medium project structure

When working with small projects, it might be best to keep everything in a single repository (I won't even try to convince you that using a VCS is beneficial). A single repository is, in most cases, simpler to navigate and maintain. Even for a small project with a limited number of resources, though, you should focus on creating reusable modules that reduce code duplication between environments.

A sample project structure can look as follows:

    infrastructure
    |- modules
    |  |- network
    |  |  |- main.tf
    |  |  |- variables.tf
    |  |  |- output.tf
    |  |- gce
    |     |- main.tf
    |     |- variables.tf
    |     |- output.tf
    |- dev
    |  |- main.tf
    |- stage
    |  |- main.tf
    |- prod
       |- main.tf

As you can see, we keep the modules (network, gce) in an additional folder in the central infrastructure repo, plus one folder for each environment (dev, stage, and prod). Why are the environments separated instead of using variables to customize each one? There are two reasons. The first is the remote state configuration: as you remember from the first part of this article, the remote state location cannot be parametrized. The second is additional flexibility: we can modify dev resources, use additional modules, or completely change the structure without worrying about breaking the production infrastructure.
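To make the layout more concrete, here is a minimal sketch of what dev/main.tf might contain. The module inputs (name, network, machine_type) and the network_name output are hypothetical; they would have to match whatever the variables.tf and output.tf files of your modules actually declare.

```hcl
# dev/main.tf: hypothetical sketch of a single environment folder

terraform {
  # Each environment hardcodes its own state location.
  backend "gcs" {
    bucket = "terraform-sandbox-302013-tfstate"
    prefix = "dev"
  }
}

provider "google" {
  project = "terraform-sandbox-302013"
  region  = "europe-west3"
}

# Shared module, referenced by a relative path inside the same repository.
module "network" {
  source = "../modules/network"

  # Hypothetical input; must match a variable declared in modules/network/variables.tf.
  name = "dev-network"
}

module "gce" {
  source = "../modules/gce"

  # Hypothetical wiring: assumes modules/network/output.tf declares "network_name".
  network      = module.network.network_name
  machine_type = "e2-small"
}
```

The stage and prod folders would follow the same pattern with their own prefix and input values, which is what lets each environment evolve independently.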

Medium to big projects structure

For bigger projects, it is not uncommon to have hundreds or even thousands of resources managed by Terraform. Complex solutions are built from complex modules that themselves consist of many smaller modules. Big projects also involve more developers and more teams working within the same infrastructure. In such a case, it is beneficial to keep modules in a separate repository. This lets you develop modules completely independently from the main infrastructure project, as long as you give your module users the ability to refer to a particular repository version (for example by using tags):

    module "bucket_with_iam" {
      source = "git::https://example.com/bucket_with_iam.git?ref=v1.2.0"
    }

Easy to use modules

Below you will find some rules that will help you design easy-to-use modules.

- Do not hardcode values within your module. It is always better to expose variables and provide default values that the user can override but does not have to.

- Create layered modules. When creating a module that provides a managed Kubernetes service, is it worth doing the network setup there as well? Maybe it would be better to create a separate module with the network setup and reuse it here. Networking is quite a common concern and might be reused somewhere else.

- Document your work. Tools like terraform-docs help you generate documentation, for example in Markdown format, that can be shipped together with your module code.

- Test your code. Infrastructure as Code can be tested as easily as any other type of code. It doesn’t matter whether you use tools like Terratest or write Bash or Python scripts; tests are crucial for any resilient and reliable infrastructure.
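The first rule, exposing variables with sensible defaults, can be sketched as follows. The module name bucket, the variable names, and the default values are assumptions chosen for illustration; the three sections would normally live in the three files named in the comments.

```hcl
# modules/bucket/variables.tf (hypothetical module)
variable "name" {
  type        = string
  description = "Globally unique bucket name. No default, so the caller must set it."
}

variable "location" {
  type        = string
  description = "Bucket location. The caller may override the default."
  default     = "EU"
}

variable "storage_class" {
  type        = string
  description = "Storage class of the bucket."
  default     = "STANDARD"
}

# modules/bucket/main.tf
resource "google_storage_bucket" "this" {
  name          = var.name
  location      = var.location
  storage_class = var.storage_class
}

# modules/bucket/output.tf
output "url" {
  description = "The gs:// URL of the created bucket."
  value       = google_storage_bucket.this.url
}
```

A caller then only has to provide name, and can still override location or storage_class for a particular environment. The descriptions on variables and outputs are also what documentation generators pick up.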

Summary

In this article, we have learned how to use a remote state in our projects to enable teamwork. We also learned about a project structure that fits projects of all sizes.

Links

https://www.terraform.io/docs/language/state/index.html
